What is Data Integration? Definition, Methods, and Business Benefits

Quick Answer

Data integration is the process of combining data from multiple disparate sources — databases, ERP systems, cloud applications, spreadsheets — into a unified, consistent view that can be used for analytics and business intelligence. It ensures that decision-makers see a single, coherent picture of business performance rather than fragmented data from disconnected systems.

Data integration is the infrastructure that makes unified analytics possible. When your sales data is in Tally, your customer data is in a CRM, and your marketing data is in Google Analytics, data integration brings them together so you can ask cross-functional questions and get a complete picture.

What is Data Integration?

Data integration is the technical and organisational practice of combining data from multiple, disparate source systems into a consistent, unified format that serves downstream analytics, reporting, and AI applications.

It involves:

- Connecting to source systems (databases, ERPs, APIs, files)

- Extracting data from those sources

- Transforming data into consistent formats, standards, and structures

- Loading unified data into an analytical destination (data warehouse, BI tool, etc.)

- Maintaining the integration as source systems change over time
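To make the "transforming" step concrete, here is a minimal sketch in Python. It assumes two hypothetical sources (a CRM export and an ERP export) that name their fields differently and use different date formats. The field names, sample rows, and helper functions are illustrative assumptions, not taken from any specific product.

```python
from datetime import datetime

# Hypothetical rows from two source systems with different conventions.
crm_rows = [{"Customer": " Acme Ltd ", "SignupDate": "05/03/2024"}]  # DD/MM/YYYY
erp_rows = [{"party_name": "ACME LTD", "created": "2024-03-05"}]     # ISO 8601


def normalise_name(name: str) -> str:
    """Collapse whitespace and upper-case so the same party matches across systems."""
    return " ".join(name.split()).upper()


def from_crm(row: dict) -> dict:
    """Map a CRM row onto the shared schema."""
    return {
        "customer": normalise_name(row["Customer"]),
        "created_on": datetime.strptime(row["SignupDate"], "%d/%m/%Y").date().isoformat(),
        "source": "crm",
    }


def from_erp(row: dict) -> dict:
    """Map an ERP row onto the shared schema."""
    return {
        "customer": normalise_name(row["party_name"]),
        "created_on": datetime.strptime(row["created"], "%Y-%m-%d").date().isoformat(),
        "source": "erp",
    }


# Both sources now share one schema: customer, created_on, source.
unified = [from_crm(r) for r in crm_rows] + [from_erp(r) for r in erp_rows]
```

After this step, both rows carry the same customer key and the same date format, which is what allows them to be joined or compared downstream.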

Why Data Integration Matters

Modern businesses generate data across dozens of systems:

| Business Function | Data Source |
|---|---|
| Finance / Accounting | Tally, SAP, QuickBooks |
| Sales | CRM (Salesforce, Zoho CRM) |
| Marketing | Google Analytics, Meta Ads |
| HR | Payroll software, HRMS |
| Operations | WMS, logistics platform, ERP |
| Customer Support | Helpdesk (Freshdesk, Zendesk) |

Without integration, each system tells a partial story. With integration, cross-functional questions become answerable:

- "Which marketing campaigns generated the most revenue?" (needs marketing + sales data)

- "Which customers have both high revenue AND growing outstanding receivables?" (needs sales + finance data)

- "How does employee headcount growth correlate with revenue growth?" (needs HR + finance data)
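As an illustration of the first question, the sketch below joins a hypothetical marketing extract to a hypothetical sales extract. It assumes both systems share a `lead_id` key; in practice, establishing such a shared key is often the hardest part of the integration. All names and figures are made up for the example.

```python
from collections import defaultdict

# Hypothetical extracts: marketing leads tagged with a campaign,
# and sales orders that reference the originating lead.
leads = [
    {"lead_id": 1, "campaign": "Diwali Sale"},
    {"lead_id": 2, "campaign": "Diwali Sale"},
    {"lead_id": 3, "campaign": "Webinar"},
]
orders = [
    {"lead_id": 1, "amount": 12000.0},
    {"lead_id": 3, "amount": 30000.0},
    {"lead_id": 3, "amount": 5000.0},
]


def revenue_by_campaign(leads, orders):
    """Attribute each order's amount to the campaign that produced its lead."""
    campaign_of = {lead["lead_id"]: lead["campaign"] for lead in leads}
    totals = defaultdict(float)
    for order in orders:
        campaign = campaign_of.get(order["lead_id"])
        if campaign is not None:  # skip orders with no known lead
            totals[campaign] += order["amount"]
    return dict(totals)


result = revenue_by_campaign(leads, orders)
```

Neither system could answer the question alone: the marketing extract has no amounts, and the sales extract has no campaign names.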

Data Integration Methods

ETL (Extract, Transform, Load)

The traditional approach: extract data from sources, transform it (clean, standardise, join), and load it into a central destination.

- Best for: Batch analytics where data doesn't need to be real-time

- Destination: Data warehouse (see data warehouse)

- Tools: Informatica, Talend, AWS Glue, Python scripts

See ETL explained for the detailed breakdown.
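A minimal ETL sketch in Python follows, using the standard-library `sqlite3` module as a stand-in for a data warehouse. The sample rows, the `sales` table, and the cleaning rules are assumptions chosen for illustration. The point is the order of operations: data is cleaned in application code before it reaches the destination.

```python
import sqlite3

# Extract: hypothetical rows as they might arrive from a source export.
raw_sales = [
    {"invoice": "INV-1", "customer": " acme ", "amount": "1,200.50"},
    {"invoice": "INV-2", "customer": "Globex", "amount": "800"},
    {"invoice": "INV-2", "customer": "Globex", "amount": "800"},  # duplicate row
]


def transform(rows):
    """Clean names, parse amounts, and drop duplicate invoices."""
    seen, clean = set(), []
    for row in rows:
        if row["invoice"] in seen:
            continue
        seen.add(row["invoice"])
        amount = float(row["amount"].replace(",", ""))
        clean.append((row["invoice"], row["customer"].strip().title(), amount))
    return clean


def load(conn, rows):
    """Write the cleaned rows into the destination table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales "
        "(invoice TEXT PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT OR REPLACE INTO sales VALUES (?, ?, ?)", rows)
    conn.commit()


etl_conn = sqlite3.connect(":memory:")  # in-memory stand-in for a warehouse
load(etl_conn, transform(raw_sales))
total = etl_conn.execute("SELECT SUM(amount) FROM sales").fetchone()[0]
```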

ELT (Extract, Load, Transform)

A modern variant where raw data is loaded first into a cloud data warehouse, then transformed. Leverages the warehouse's computing power for transformation.

- Best for: Large-scale cloud analytics on modern data stacks

- Destination: BigQuery, Snowflake, Redshift

- Tools: Fivetran, Airbyte, dbt
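For contrast with ETL, here is a minimal ELT sketch. It again uses `sqlite3` as a stand-in for a cloud warehouse, which is an assumption made so the example runs anywhere. Raw rows are loaded untouched, and the cleaning happens afterwards as SQL inside the destination. The table names and sample rows are hypothetical.

```python
import sqlite3

warehouse = sqlite3.connect(":memory:")  # stand-in for a cloud warehouse

# Load: raw rows land as-is, with every column stored as text.
warehouse.execute("CREATE TABLE raw_orders (order_id TEXT, customer TEXT, amount TEXT)")
warehouse.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [("1", " acme ", "100.0"), ("2", "ACME", "50.5"), ("3", "globex", "20")],
)

# Transform: SQL executed inside the warehouse builds the analytical table.
warehouse.execute(
    """
    CREATE TABLE customer_revenue AS
    SELECT UPPER(TRIM(customer))     AS customer,
           SUM(CAST(amount AS REAL)) AS revenue
    FROM raw_orders
    GROUP BY UPPER(TRIM(customer))
    """
)
warehouse.commit()

revenue_rows = warehouse.execute(
    "SELECT customer, revenue FROM customer_revenue ORDER BY customer"
).fetchall()
```

Because the raw table is kept, the transformation can be rewritten and re-run later without extracting from the source again.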

API Integration

Real-time or near-real-time data exchange between systems via their APIs. Used when data must be fresh immediately (e.g., a CRM syncing with a marketing platform).

- Best for: Operational integrations and real-time syncs

- Examples: Tally-to-BI connector, CRM-to-dashboard sync
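One common pattern for API-based syncs is an incremental pull driven by a cursor. The sketch below assumes a hypothetical source API that can return records changed after a given timestamp. `fake_crm_api` is an in-memory stand-in for a real HTTP call, and none of the names correspond to an actual vendor API.

```python
from typing import Callable, Dict, List


def sync(fetch_changes: Callable[[int], List[dict]],
         destination: Dict[str, dict],
         cursor: int) -> int:
    """Pull records changed after `cursor`, upsert them, and return the new cursor."""
    for record in fetch_changes(cursor):
        destination[record["id"]] = record  # upsert keyed on the record id
        cursor = max(cursor, record["updated_at"])
    return cursor


# In-memory stand-in for a source system. A real integration would issue an
# HTTP request here (hypothetically: GET /contacts?updated_after=<cursor>).
_crm_records = [
    {"id": "c1", "email": "a@example.com", "updated_at": 10},
    {"id": "c2", "email": "b@example.com", "updated_at": 20},
]


def fake_crm_api(updated_after: int) -> List[dict]:
    return [dict(r) for r in _crm_records if r["updated_at"] > updated_after]


marketing_contacts: Dict[str, dict] = {}
sync_cursor = sync(fake_crm_api, marketing_contacts, cursor=0)   # first run copies both
_crm_records[0].update(email="new@example.com", updated_at=30)   # a change in the source
sync_cursor = sync(fake_crm_api, marketing_contacts, sync_cursor)  # second run copies only c1
```

Storing the cursor between runs means each sync transfers only what changed, which keeps frequent syncs cheap.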

Data Virtualisation

Query data in its original location without physically moving it. A virtual layer presents a unified view.

- Best for: When moving data is impractical and somewhat higher query latency is acceptable

- Tools: Denodo, Trino
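The idea can be approximated on a small scale with SQLite's `ATTACH DATABASE`, which lets one connection query several database files in place. This is only an analogy for what dedicated virtualisation tools do, and the file names, tables, and rows below are assumptions made for the example.

```python
import os
import sqlite3
import tempfile


def make_source(path, ddl, insert_sql, rows):
    """Create an independent database file standing in for a source system."""
    conn = sqlite3.connect(path)
    conn.execute(ddl)
    conn.executemany(insert_sql, rows)
    conn.commit()
    conn.close()


tmp_dir = tempfile.mkdtemp()
sales_path = os.path.join(tmp_dir, "sales.db")
finance_path = os.path.join(tmp_dir, "finance.db")

make_source(sales_path,
            "CREATE TABLE orders (customer TEXT, amount REAL)",
            "INSERT INTO orders VALUES (?, ?)",
            [("Acme", 500.0), ("Globex", 300.0)])
make_source(finance_path,
            "CREATE TABLE receivables (customer TEXT, outstanding REAL)",
            "INSERT INTO receivables VALUES (?, ?)",
            [("Acme", 120.0)])

# The "virtual layer": one connection that sees both sources without copying rows.
virtual = sqlite3.connect(":memory:")
virtual.execute("ATTACH DATABASE ? AS sales", (sales_path,))
virtual.execute("ATTACH DATABASE ? AS finance", (finance_path,))

combined = virtual.execute(
    """
    SELECT o.customer, o.amount, COALESCE(r.outstanding, 0.0) AS outstanding
    FROM sales.orders AS o
    LEFT JOIN finance.receivables AS r ON r.customer = o.customer
    ORDER BY o.customer
    """
).fetchall()
virtual.close()
```

The join is computed at query time against the original files, so results always reflect the current source data, at the cost of doing the work on every query.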

Data Integration for Indian Businesses

For many Indian businesses, a common data integration challenge is connecting Tally to analytics platforms. Tally often holds the most authoritative business data (financial records, inventory, party data) but has limited native integration capability.

AI analytics platforms like FireAI solve this with a native Tally connector that:

- Establishes a real-time connection without data export

- Transforms Tally's ledger/voucher structure into analytical datasets automatically

- Combines Tally data with other sources (CRM, Excel, APIs) for unified analytics

This is data integration in practice — making Tally's rich data available for dashboards, AI queries, and automated insights without building a custom ETL pipeline.
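To give a feel for what "transforming a ledger/voucher structure into an analytical dataset" can mean, here is a rough sketch. The nested voucher shape, the field names, and the sign convention are hypothetical simplifications for illustration. They are not Tally's actual export schema and not a description of how FireAI's connector works.

```python
# Hypothetical, simplified voucher shape for illustration only.
# This is NOT Tally's actual export schema or any vendor's internal model.
vouchers = [
    {
        "number": "S-001",
        "date": "2024-04-01",
        "type": "Sales",
        "party": "Acme Traders",
        "entries": [
            {"ledger": "Acme Traders", "amount": -1180.0},  # assumed sign convention
            {"ledger": "Sales", "amount": 1000.0},
            {"ledger": "Output GST", "amount": 180.0},
        ],
    },
]


def flatten(vouchers):
    """Emit one flat row per ledger entry, repeating the voucher header fields."""
    return [
        {
            "voucher": v["number"],
            "date": v["date"],
            "type": v["type"],
            "party": v["party"],
            "ledger": e["ledger"],
            "amount": e["amount"],
        }
        for v in vouchers
        for e in v["entries"]
    ]


fact_rows = flatten(vouchers)
```

Flat rows like these are easier to filter, group, and join with data from other systems than nested accounting documents are.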

Data Integration vs Data Management

| Aspect | Data Integration | Data Management |
|---|---|---|
| Focus | Combining data from multiple sources | Governing, storing, and maintaining data |
| Scope | Technical data movement | End-to-end data lifecycle |
| Output | Unified data view | Trustworthy, accessible data assets |

Data integration is a key component within the broader data management framework.

Frequently Asked Questions

What is the difference between data integration and ETL?

ETL (Extract, Transform, Load) is one specific method of data integration. Data integration is the broader goal of combining data from multiple sources into a unified view. ETL, ELT, API integration, and data virtualisation are all methods used to achieve data integration.

Why is data integration important for analytics?

Analytics on a single system only answers questions about that system. Data integration enables cross-functional analysis, such as connecting sales data with finance data or marketing data with customer data, which produces the insights that drive meaningful business decisions.

How does data integration work with Tally?

Modern BI platforms with native Tally connectors (like FireAI) establish a real-time connection to your Tally installation, extract sales, purchase, inventory, and ledger data automatically, transform it into analytical datasets, and make it available for dashboards and AI queries, without any manual data export or ETL development.

Related Guides From Our Blog

Democratizing Data: How AI Analytics Levels the Playing Field for Small Businesses and Freelancers

For decades, data-driven decision making was a luxury that only enterprises could afford. Big companies hired data scientists, purchased expensive BI tools, and built complex data warehouses. In exchange, they received precise insights that guided budgets, strategy, and growth.

RIP, Traditional ETL. Here's What Comes Next.

For decades, **ETL (Extract, Transform, Load)** was the backbone of data engineering. Data engineers built systems that moved raw data from scattered ...

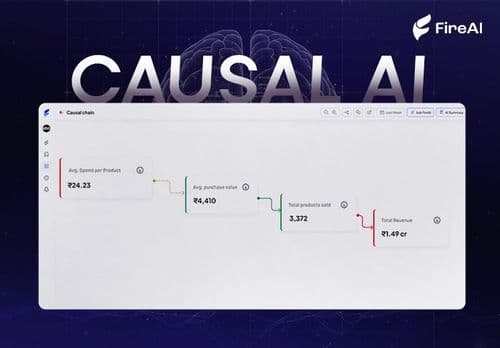

Causal AI Explained: Uncovering the “Why” in Data with Machine Learning

Causal AI reveals not just what will happen, but why — and exactly what changes if you act differently. It turns predictions into high-ROI decisions by uncovering true cause-and-effect in your data.